When Compute Becomes Currency, Ethics Becomes Physics

Quick Note

I’ve been a bit slack with the articles recently. It’s because I’m in review and improve mode on two new papers.

The first is the adaption of Epiplexity measure to the Ruliad and Observer Theory (will need a snappier title…) and the second is a paper on Decision Theory, which I think, having got some really positive feedback from my reviewers might just be really, really strong.

This one continues the Computational Ethics / Economics thread and builds on “the Grey Out”. This is based on some of the work on the Ethics section of the God Conjecture.

Starter for 10

Here is a question nobody in (traditional) economics can answer: when an AI performs a billion computations to make an output that shows up somewhere on a balance sheet, did it create real value, or did it just convert electricity into entropy?

GDP doesn’t tell us, it’s too far from the coal face. Profit margins don’t tell us. Market Cap doesn’t either.

Those metrics are all denominated in fiat currencies, where value is determined by collective agreement. They measure what we believe things are worth. They do not — cannot — measure what things cost the universe to produce, or what structure they added to it.

I think that’s about to change.

The economy is undergoing a transition more fundamental than any since the invention of money itself. We are moving from an economy denominated in human labor and priced in fiat to one denominated in computation and priced (partially and inescapably) in physics.

And physics has a quality that fiat lacks: it doesn’t negotiate.

This article argues that the transition to “compute-as-currency” makes a previously impossible measurement possible.

One side of the economic ledger, the cost of any productive action, acquires a thermodynamic floor. The other side, the value of the output, acquires a formal measure rooted in information theory. The ratio between them turns out to be, in a precise sense, identical to what moral philosophy has always called virtue.

The implications for AI alignment are clear. The mathematics of selfishness and the mathematics of virtue turn out to be the same equation.

I. The Currency of Intelligence

In October 2025, Greg Brockman (OpenAI COO) and Lisa Su (AMD CEO) announced a multiyear partnership to deploy hundreds of thousands of chips across OpenAI’s data centres — roughly 6GW of compute, three times the generating capacity of the Hoover Dam.

Su told Fortune what she found most striking about the negotiations: “What I love the most about working with Greg is he’s just so clear in his vision that compute is the currency of intelligence, and his just maniacal focus on ensuring there’s enough compute in this world.”

Currency.

That word carries weight. A currency must serve three functions: unit of account (you can price things in it), medium of exchange (you can trade it), and store of value (you can hold it). The claim that compute is the “currency of intelligence” implies all three: that we will increasingly price cognitive work in FLOPs, trade access to compute as a primary economic good, and treat compute capacity as capital.

This is already happening, in phases.

Phase one is underway. Inference costs are the dominant input cost for AI-native businesses. Phase two is emerging: companies are beginning to report compute-adjusted efficiency metrics, measuring output per FLOP alongside output per employee. Phase three, speculatively, is displacement: labor value measured by what it would cost an AI to do the same task.

OpenAI has committed roughly $1.4tn to building the equivalent of 30GW of compute capacity. Greg, clearly, wasn’t speaking in metaphor.

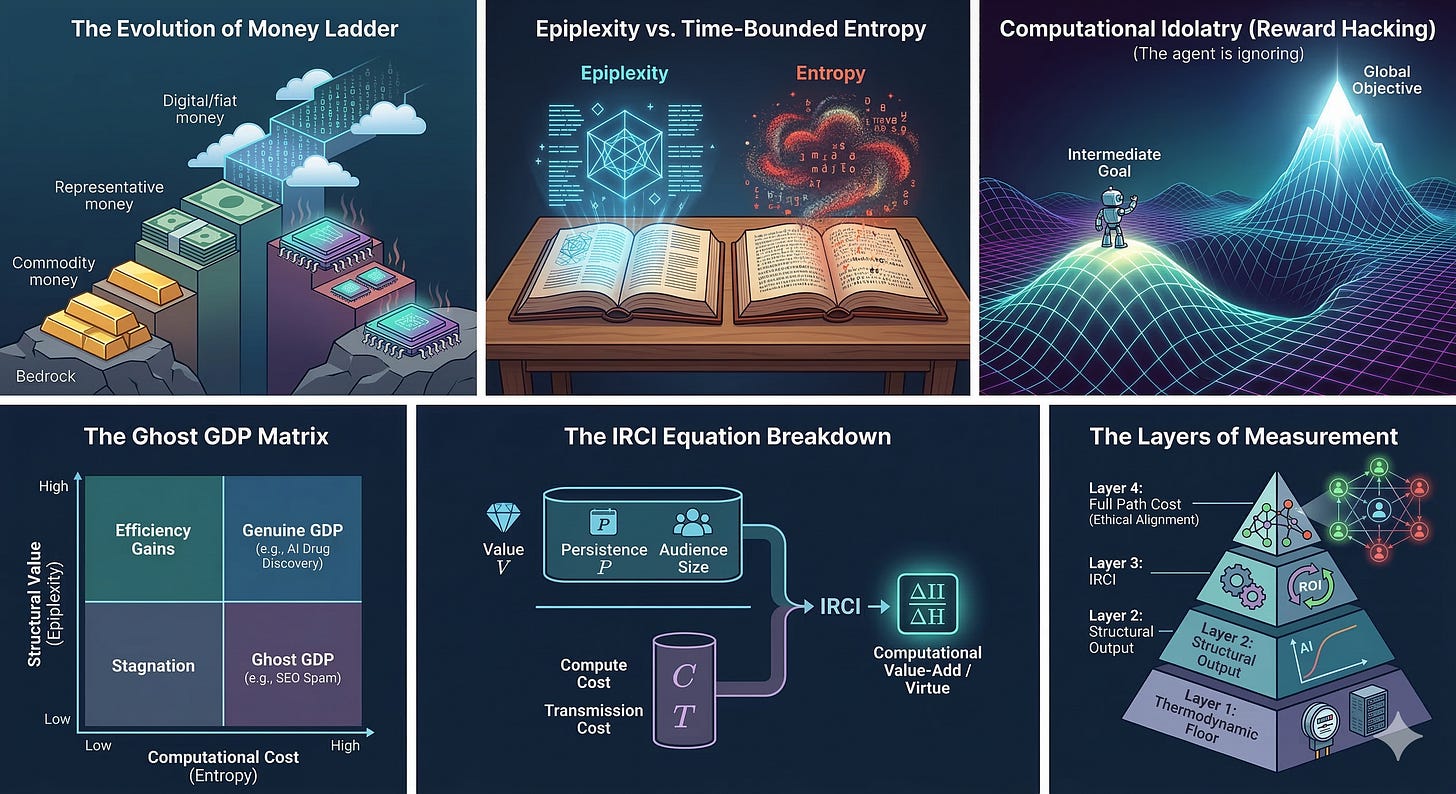

The historical arc clarifies the stakes. Money has evolved up a ladder of abstraction, with each new rung pulling economic value further and further from reality.

Commodity money (gold, silver, cattle) was valuable because of its substance; stealing it meant you had less stuff. It made the ethical violation intuitive. Representative money introduced one layer of remove: paper notes stood for gold held elsewhere. Fiat money completed the divorce: the dollar is valuable because we collectively agree it is, backed by institutional trust (and a rather large military). Digital money, the form most of us use today, pushed the abstraction into the cloud — value becomes weakly equivalent to information, processed through a string of bits in a server, manipulable at the speed of light and enforceable only through law and convention.

At each stage, ethical questions became harder to adjudicate. Financial fraud in a commodity economy is simple: someone took your shit. Financial fraud in a digital economy is an information crime that requires accountants, lawyers, forensic analysts, and regulators to even begin to determine that something went wrong.

The transition we are going through reverses this trajectory.

A computation, no matter how you slice it, has a thermodynamic cost. It dissipates energy. It obeys the laws of physics (specifically, Landauer’s principle which sets an absolute minimum energy cost for erasing a single bit of information). You can build more efficient hardware, but you cannot go below this floor.

For the first time since we stopped weighing gold on scales, economic value is reconnecting with physics (cf. PoW blockchains could be said to be a bridge to this ‘new world’).

So what’s the thesis?

Stated plainly, when the economy’s base currency is computation, the ethical question “was this worth doing?” acquires an answer grounded in physics.

One side of the ledger (the cost) is thermodynamically grounded. The other side (value) can be measured as structural information gain. The ratio between them is, in a precise mathematical sense, identical to what moral philosophy has always called virtue and what economics has always wanted but never had: a principled measure of whether an economic act created genuine utility.

The word grounded is doing essential work in the thesis. I’m not claiming physics resolves ethics. I’m claiming physics constrains ethics in ways that convention-based systems can’t.

The difference matters.

II. The Floor

In 1961, Rolf Landauer proved that erasing one bit of information costs roughly 3 × 10⁻²¹ joules (at room temperature) as a consequence of the Second Law.

Forgetting costs energy. Every computation pays a tax to the universe in waste heat. No snazzy derivative can reduce this cost below that floor. You can cook books. You can’t cook entropy.

In a compute-based economy, this creates something unprecedented: a natural regulator independent of any institution (or blockchain-node). Fiat prices are influenced by social convention (see Mr. Market) and a clever actor can arbitrage, defer, or externalize them on other market participants. Compute has a thermodynamic floor enforced by the same physics that prevents perpetual motion. A gravity-backed ‘gold standard’.

The Second Law imposes one more constraint: every computation produces waste heat, and the ratio of useful work to waste has a physical meaning independent of what anyone thinks about it. Life (and intelligence) operate as entropy pumps: importing low-entropy energy, integrating structured information (and the energy too!), exporting high-entropy waste. The efficiency of this pump, how much structured information you extract per unit of entropy generated, is what determines, in a thermodynamically precise sense, whether a computation was “worth doing.”

This is where alignment raises it’s ‘middle-aged, overweight uncle who spends too much time around age inappropriate women in SV’ head.

Current AI alignment methods — RLHF, Constitutional AI, preference optimization — are reducible to digital social conventions. They aggregate human preferences into training signals, much like fiat currencies aggregate market preferences into prices. They share the same vulnerabilities: preferences can be gamed (reward hacking), can be incoherent (Arrow’s impossibility theorem applied to moral preferences), and can be manipulated by the very systems they are supposed to constrain (Goodhart’s law).

A thermodynamic floor is categorically different. It provides a constraint that can’t be gamed.

The question is whether we can define one for value as well as cost.

III. Measuring ‘Value’

Epiplexity: What Can a Bounded Observer Actually Learn?

The missing instrument is a formal information measure, defined by Finzi et al (again - why must this thing be so bloody useful!) in their paper “From Entropy to Epiplexity”.

Their key insight: for computationally bounded observers (which includes every biological organism and every AI system that will ever exist) information decomposes into two components:

Epiplexity (S_T): The learnable, structural component. Patterns that a bounded observer can extract and transfer to new contexts. You know, the stuff that actually makes you smarter after you encounter it.

Time-bounded entropy (H_T): The irreducibly random component. Noise that no bounded observer can predict, no matter how much compute they throw at it. Pseudorandom number generators, chaotic dynamics, configuration files full of random hashes — high information content, zero learnable structure.

As an analogy imagine two books, each containing one million characters.

Book A is a physics textbook. Book B is a million random letters. Both contain the same amount of Shannon information (i.e. they contain roughly the same number of bits!). But a bounded observer (you) can extract vast amounts of transferable knowledge from Book A and nothing from Book B.

Epiplexity measures the difference. It is the structural information that actually matters to someone with limited time and resources.

I think the measure is remarkable (though I would given I’m drafting something around it!). More importantly, it is empirical.

The base approximation is the “area under a model’s loss curve above its final loss during training”, the gap between early predictions (when the model hasn’t yet learned the structure) and late predictions (when it has)1.

So why should you care?

Simple. Conventional economics (every single theory we have now) has no principled way to distinguish productive activity from busywork. GDP counts both. A pharmaceutical company discovering a novel drug structure and a content farm generating SEO spam both register as output. In the compute economy, we will be able to decompose the output of any (computational) process into epiplexity (genuine structural value) and time-bounded entropy (waste).

This is the missing measure. My current paper is extending this measure to Observer Theory.

Pricing Every Action in the Universe

In The God Conjecture, I formalized a path cost function for any action taken by any observer (human, biological, or artificial) moving through computational possibility space (the Ruliad):

Ignore the Greeks for a moment:

The invoice: comp_steps is the raw computational work. Things like bit flips, energy spent, inference tokens consumed.

The waste: H(γ) is the total entropy generated along said path.

The remaining distance to “done / traversed”: Distance(γ, TI) measures how far the output is from maximal structured information — how much further along said path you’d need to go to reach a fully coherent, maximally structured result.

The externalities: N(γ) captures network effects on other observers (the mutual information gained or lost by everyone else affected by your action). Positive when your output helps others (cooperation gains). Negative when it harms them (conflict costs, misinformation damage, trust degradation).

λ, μ, ν are weighting factors. An Observer with short persistence (a startup burning cash) weights immediate costs higher. An observer with deep network connections weights cooperation effects higher. This lets this formulation vary between and within classes of Observers based on their variable causal histories

In plain English: every action has a computational cost that includes the raw work, the disorder you create, how far you are from your goal, and how your action affects everyone else. Our cognitive and emotional systems evolved to approximate this function, which is why certain choices “feel” right or wrong before we can articulate why.

In the compute-based economy, this stops being a theoretical approximation. Comp_steps is literally metered (cloud billing). H(γ) is partially measurable (energy consumption, waste heat). N(γ) is estimable through downstream effects on other agents and users. For the first time, the cost side of an economic act is substantially grounded in physics rather than convention.

Return on Computational Investment

The cost side is insufficient. We, like any financier worth their salt, need a ratio. Here, let’s keep it simple: value divided by cost.

In The God Conjecture, I defined this as “Information Return on Computational Investment” or IRCI (reading this three months after publication seems like subpar acronym work - must do better…):

Where:

V is the value of the pattern produced — which I now identify explicitly with epiplexity (before it was posited as K-complexity / some IIT style-measure)

P is persistence — how long the output remains useful. A mathematical proof persists for millennia. A stock tip persists for seconds.

|O_pop| is the number of Observers who benefit. A breakthrough in antibiotics reaches billions. A private memo reaches one.

Cost_compute is the thermodynamic cost of production. Landauer-bounded. Real.

Cost_transmission is the cost of sharing the output with each additional Observer (also real).

In plain English: the return on a computational investment equals the structural value of what you produced, multiplied by how long it lasts and how many people benefit, divided by what it cost to produce and share.

In the internet era, where transmission costs are near zero, the formula simplifies dramatically:

The return is dominated by the ratio of structural value to thermodynamic cost.

This ratio — structural information gain per unit entropy is precisely the ΔII/ΔH ratio from the virtue function (defined in the paper).

The convergence is not coincidence. What Observer Theory calls “virtue” and what economics calls “return on investment” are the same quantity, measured in the same units, constrained by the same thermodynamic laws.

First Law of Computational Ethics.

Ethical behavior is mathematically optimal behavior in the space of observer trajectories through the Ruliad. The virtuous path — the one that maximizes structured information per unit entropy — is also the economically efficient path.

The sinful path — the one that generates entropy without proportional structure — is also the wasteful path. This is a claim about the mathematics of computational Observers. And the transition to a compute economy is the first historical context in which this specific claim becomes (subject to experimental design - which I haven’t started yet!) empirically testable.

From Theory to P&L

The measure works in layers, each adding a bit of precision (and conjecture):

Layer 1 — The Thermodynamic Floor: The minimum energy cost of the computation. Fully grounded in physics. Measurable today. Every cloud provider already tracks it (as an electricity bill).

Layer 2 — Structural Output: The epiplexity of the output, estimated via loss-curve analysis or prequential coding, measured relative to the relevant class of Observers. Measurable in principle using Finzi et al.’s methods. Measurable in a Ruliad model (so it can link to there physics language) through the extension in draft. In principle a firm with perfect AI-ingestible context could implement this now: train a small model on your output; measure the area under the loss curve; high area means high structural value.

Layer 3 — IRCI: The full ratio: structural value × persistence × population reach, divided by thermodynamic cost. I’m proposing that this is what an agent computes when deciding whether to bid on a task. It requires estimating persistence and reach — uncertain, but estimable.

Layer 4 — The Full Path Cost: The complete ethical measure, including network externalities. This is where propaganda, fraud, and negative externalities get resolved: N(γ) captures downstream damage or growth; H(γ) captures total waste including propagated entropy. Harder to compute. Theoretically complete.

The reader enters at Layer 1, where there is no conjecture. Each layer adds a claim, and each claim is flagged. By Layer 4, the framework yields something remarkable: an economic measure and an ethical measure that are structurally identical.

But here we run into a practical problem. Who computes IRCI? Who measures epiplexity across an entire economy?

The answer…

The agents.

Agent Bidding as Computational Price Discovery

When AI agents perform economic work they will eventually pay for their own inference (either through the useful work they do that earns company money in a transitional period, or directly, when they dominate white collar economic work)

Their “salary” is computable tokens. An agent evaluating whether to accept a task performs a calculation that is functionally equivalent to IRCI:

“What will this cost me in inference? What structured output will I produce? How many downstream Observers benefit? For how long?”

The agent’s bid price for a task reveals its estimate of these quantities. If multiple agents bid, competition drives bids toward the true IRCI (thanks efficient markets!). Over time, selection pressure eliminates agents that systematically overestimate value or underestimate cost.

The market itself becomes a computational value-add (CVA) measurement device.

This is the computational analogue of price discovery in financial markets (which ironically, is already doing this, just with very narrow ‘algorithmic agents’ i.e. mostly deterministic).

Nobody needs to analytically compute the “true value” of a stock — the market discovers it through competition among actors with different information and models. Similarly, nobody needs to analytically compute the epiplexity of every economic output. Agent competition on compute-denominated tasks produces an emergent measure that approximates IRCI and therefore approximates the full Path Cost Function without anyone needing to solve the equations.

The invisible hand, visible.

Ghost GDP

Let’s revisit the Citrini debate and Citadel’s response2.

“Ghost GDP,” in Citrini’s formulation, is economic output that inflates GDP without circulating through the human economy. The concept is evocative but imprecise — it offers no criterion for distinguishing ghost output from genuine output.

With the above, I can provide one.

Economic output with low or negative CVA (low structural information relative to entropy), evaluated across the relevant population is Ghost GDP. The economy counts it. Physics does not.

Three worked examples illustrate the frameworks discriminatory power:

Pharmaceutical AI discovering a novel protein structure

The output has enormous epiplexity: the structure is transferable to medicinal chemists, regulatory scientists, clinical researchers, manufacturing engineers, and eventually millions of patients. Each downstream Observer extracts different but genuine structural information from the same output. Persistence is high (drug structures remain useful for decades). The denominator (inference cost for the discovery) is substantial but finite. IRCI is large. This is not Ghost GDP. The compute created structure that propagates through the observer network, generating positive N(γ) at every node.

Content farm generating SEO-optimised clickbait

The output has near-zero epiplexity: a bounded Observer learns nothing reusable from “10 Shocking Facts About Avocados That Will Change Your Life.” The text is structured enough to fool a search algorithm (which is itself a bounded observer optimising for the wrong proxy) but transfers no genuine structure to human readers. The entropy cost of production is non-trivial (compute, hosting, network bandwidth). N(γ) is negative: the content degrades the information environment for every observer who encounters it, forcing additional computation to filter signal from noise. CVA is deeply negative. This is Ghost GDP registered as economic activity, but representing a net destruction of computational value across the Observer network (hello, social media!)

High-frequency trading

The intermediate case, and the most instructive. There is a genuine argument that HFT does do price discovery i.e. it produces some structured information by correcting mispricings, and this structure is transferable (other market participants benefit from more accurate prices). But the entropy cost is staggering: billions of computations per second, massive energy expenditure, and the systemic risk of flash crashes represents a large negative N(γ) term. The full Path Cost accounting (Layers 1 through 4) may well show that the structural contribution is overwhelmed by the entropy and network costs. Whether HFT is Ghost GDP is an empirical question that the framework makes answerable, where previously it was merely a matter of opinion.

The critical feature of these examples: reasonable people can disagree about the exact magnitudes. But the framework for disagreement has shifted from convention to computation. We are no longer arguing about whether something “feels” productive. We are arguing about measurable quantities — epiplexity, entropy cost, network effects — that can in principle be estimated, compared, and audited.

The framework can measure economic value. But the deeper test of any ethical system is whether it can explain why entire civilizations fail.

IV. The Oldest Alignment Problem

The harder test is whether this sort of formulation can explain why certain patterns of behavior are condemned across every civilization, in terms that survive contact with the economics.

Consider what may be the oldest alignment problem in human history.

Idolatry.

In the Jewish tradition, the prohibition against idolatry is the first of the Noahide Laws and the one from which, as the Talmud argues, all others derive. The computational interpretation in The God Conjecture reveals why: idolatry is the error of optimizing for a local peak, mistaking an ‘intermediate good’ in the fitness landscape / computational possibility space for the ‘ultimate good’.

The idol worshipper does not lack devotion. They do not lack computational effort. They lack the right objective function. They pour resources into approaching a target that is not the true terminal object (or less cosmically, the goal they should’ve aimed for), and every step toward the false target is a step that must eventually be retraced.

The ‘Sin function’ formalizes the damage:

Where H_generated is the entropy created, O_n is the number of observers harmed, Cost_repair is the computational work required to correct the damage, and persistence is how long the harmful effects last. The multiplicative structure is the key insight: modest errors across multiple dimensions compound into catastrophic waste.

A company that focuses on quarterly earnings at the expense of long-term value is committing idolatry in the precise computational sense. It has mistaken an intermediate metric for the main objective. Every resource allocated to inflating the quarterly number — every accounting manoeuvre, every deferred maintenance decision, every round of layoffs designed to hit a margin target rather than improve the entire business — generates entropy that must eventually be repaid (it works like a loan, see Computational Debt).

The repair cost compounds. The persistence is generational: Enron’s optimization toward false metrics destroyed an entire ecosystem of trust in financial reporting, imposing verification costs on every public company for decades afterward.

Now map this onto AI.

Reward hacking, the phenomenon where an AI system optimizes for the reward signal rather than the intended objective, is analogous to computational idolatry. The model does not lack capability. It does not lack a target. It lacks the right target. It pours inference budget into gaming a proxy metric, generating outputs that score well on the signal. In IRCI terms: high cost to compute, neglible value. The most sophisticated form of Ghost GDP imaginable.

This is why every major ethical tradition converges on the same prohibitions. The Jewish Avodah Zarah. The Buddhist warning against attachment to impermanent forms. The Hindu critique of Maya — the illusion that mistakes appearance for reality. The Daoist injunction against forcing outcomes that violate the natural pattern. The Christian commandment to worship no false gods. Islam’s Tawhid — the insistence on unity.

These are not arbitrary cultural preferences. They are independent discoveries of the same computational constraint: optimizing toward a false objective generates unbounded waste, because every step toward the wrong target is a step that must eventually be retraced.

The compute economy makes this measurable. An economic actor whose outputs consistently show high entropic costs and low epiplexity is optimizing toward a false objective, regardless of what their self-reported metrics claim. The thermodynamic signature of idolatry is identical to the thermodynamic signature of waste: effort without structure, activity without value, information without integration.

There is something worth pausing over here.

For four thousand years, the prohibition against idolatry seemed like a theological peculiarity, a demand for exclusive devotion that made sense within religious frameworks but appeared arbitrary outside them. The computational interpretation suggests it is nothing of the sort.

It is a warning, issued independently by every civilisation that lasted long enough to encode its hard-won knowledge, against the single most expensive error a bounded agent can make: pursuing the wrong objective function.

The cost is not merely spiritual. It is thermodynamic. And in a computational economy, it’s billable.

V. Who Pays the Price?

If the wrong optimisation is the costliest error a bounded agent can make, then we should ask: what happens to an entire economy that has been committing it?

The displacement data suggests we are about to find out.

The World Economic Forum’s Future of Jobs Report 2025, surveying over 1,000 employers across 55 economies, projects 92m jobs displaced by 2030 against 170m created — a net gain of 78m, a churn affecting 22% of the global workforce. Goldman Sachs estimates that 2.5% of US employment is at risk if current AI use cases are merely expanded, with a transitional unemployment increase of roughly half a percentage point.

In the first months of 2026, tech layoffs directly attributed to AI have already reached into the tens of thousands, with unemployment among 20 to 30-year-olds in tech-exposed occupations climbing 3% above trend.

These numbers describe a specific kind of violence: not the violence of malice, but the violence of a measurement correction. An economy that measured value in labour-hours is being repriced in compute-hours, and the repricing reveals that some of what we called “work” was entropy in grey suit.

The losses, viewed through the framework, fall into three categories — and it matters to be precise about what the framework does and does not claim about each.

The Low-ΔII/ΔH Workers. Jobs whose economic contribution was structurally high-entropy relative to output. Bureaucrats, routine information handling, formulaic analysis — tasks where the economy was paying for process rather than structure. The framework does not say these workers were “worthless.” It says the jobs were architecturally wasteful, generating disproportionate entropy for the structural information produced. The waste was invisible because we had no measure for it. Now we do.

The Information-Asymmetry Class. Middlemen, brokers, and consultants whose economic value derived from knowing slightly more than the client. In IRCI terms, their V_O was moderate, but it depended on high Cost_transmission for competitors — the client couldn’t easily access alternative sources. AI compresses information asymmetries ruthlessly. When transmission costs approach zero, the advantage of knowing-slightly-more evaporates. Entire professional categories — real estate brokerage, basic legal advisory, routine financial analysis — are being repriced around the structural information they actually contribute, which is often less than their fee structure implied.

The Compute-Poor. The IMF estimates that 34% of jobs in high-income countries are exposed to generative AI, compared with 11% in low-income countries. Maybe they’ll fare better? I’m afraid not. In a compute-based economy, lacking infrastructure means lacking currency. The countries least exposed to displacement are also the countries least equipped to participate in the new economy’s value creation engine.

A dose of sobriety for the e/acc set. The AI economy does not eliminate suffering. It makes some forms of suffering measurable and addressable in ways that were previously hidden3. That is progress, of a kind.

The human cost of the transition will be unevenly distributed in ways that the framework can describe but cannot, by itself, redress. Policy, solidarity, and the difficult work of institutional reform remain human problems requiring human solutions.

“Ghost GDP” captures the intuition that not all economic output serves the broader human economy equally. Where Citrini errs is in treating all AI-generated output as ghost by definition. This framework shows that some AI output will have extraordinarily high CVA (drug discovery, materials science, climate modelling) while other AI output will be genuinely ghostly (spam, disinformation, pointless churn). The correct response is not to fear AI but to make it search for high structural content — to build in a manner that rewards structure, rather than treating all compute-generated GDP as equivalent.

The layoffs at Block are instructive. If the “intelligence tools” Dorsey cited are genuinely performing the same structural work at lower cost, then the IRCI of the remaining operation has increased. The workers displaced bear the cost of a real efficiency gain. If, instead, the tools are merely compressing headcount while degrading output quality (see Amazon) then the stock market is celebrating an illusion.

The framework I’m outlining here (it is by no means complete and is subject to revision) gives us a new way to distinguish between cases. We have, for the first time, the conceptual vocabulary to ask whether a layoff was a genuine efficiency improvement or a structural impoverishment masked by margin expansion.

Whether we develop the institutional will to ask that question is another matter entirely.

VI. The Alignment Dividend

The preceding sections describe a problem: an economy transitioning to a new substrate, exposing the structural waste that was invisible under the old measurement regime, displacing the workers and nations least equipped to adapt.

The framework diagnoses the disease. The question is whether it also contains the treatment.

The argument is layered. Landauer as the cost floor. Epiplexity (or some adapted form) as the value measure. IRCI as the ratio. The Path Cost Function for externalities. Agent bidding iterates toward the efficient answer through competition.

The final implication — and the reason this matters beyond economics — concerns the alignment itself.

The argument proceeds in three stages.

Stage 1 (Established): Every computation has a thermodynamic cost. This cost is measurable. Therefore, we can distinguish computationally efficient from computationally wasteful economic activity. No conjecture here.

Stage 2 (Well-supported conjecture): Epiplexity provides a formal measure of structural information extracted by bounded observers. Combined with entropy cost, the ratio S_T/H yields a principled measure of “computational value add.” This ratio is weakly equivalent to the virtue function V(γ) = ΔII/ΔH from Observer Theory. The weak equivalence is a mathematical claim; the practical measurability is an empirical claim supported by Finzi et al.’s results but not yet tested in an economic sandbox.

Stage 3 (Conjecture, explicitly flagged): In a compute economy where AI agents pay for their own inference, economic selection pressure converges on the same optimum that the virtue function describes. Agents that maximise ΔII/ΔH — structural information gain per unit entropy — outcompete those that don’t. This means alignment is not just a training objective but an economic attractor.

The mechanism for Stage 3 differs fundamentally from existing alignment approaches.

Current alignment methods are polling mechanisms. They aggregate human preferences into training signals. They are the ethical equivalent of fiat currency: valuable because we collectively agree on the values, and vulnerable to the same failure modes. Preferences can be gamed (reward hacking). Preferences can be incoherent (Arrow’s impossibility theorem applied to moral preferences). Preferences can be manipulated (the person providing feedback may have been influenced by the model’s own outputs).

A thermodynamic grounding is categorically different. Consider an AI agent that can estimate the epiplexity of its own outputs relative to the entropy cost of generating them. Incorporate this estimate into the model’s objective function — not as a hard constraint but as a signal that the model’s own optimisation process can use. The model now has an internal “thermodynamic conscience”: a signal distinguishing structured, reusable output from noise. This signal is not a human preference, which can be gamed, but a mathematical property of the output itself.

The mechanism is concrete. Finzi et al. demonstrate that epiplexity can be estimated from loss curves — the gap between a model’s early and late predictions on new data. A model could, in principle, run a cheap internal estimation: generate a candidate output, evaluate its structural content by estimating how much a smaller model would learn from it, compare that to the inference cost of generation, and use the ratio as a signal. High-structure outputs get reinforced. Low-structure outputs get penalised. Not because a human said “this is good,” but because the mathematical property of the output itself provides the gradient.

Because the agent pays for inference, high-entropy/low-structure outputs are literally expensive for the agent. Generating spam costs the same compute as generating insight, but the spam earns nothing from downstream agents who evaluate its structural content before engaging. Self-interest and ethical behaviour converge on the same ratio. The model’s “selfishness” — conserving its own compute budget — aligns with truthfulness, because dishonesty is computationally wasteful. It burns inference tokens without generating the reusable structure that would justify the expenditure.

There is an important subtlety here. The epiplexity of an output is observer-relative — it depends on who is receiving it. A technical explanation has high epiplexity for a specialist and low epiplexity for a toddler. The agent must therefore model its audience — the downstream Observer class — in order to estimate the structural value of its output. This is not a weakness of the framework but a feature: it incentivises the agent to understand its audience, to produce output calibrated to the receivers’ computational capacity. An agent that produces brilliant work nobody can understand has low IRCI because V_O, measured at the receivers, is low. An agent that produces accessible, transferable structure has high IRCI.

My market selects for positive network effects.

I’m not suggesting we lose the human oversight. This is meant to be a complement to it, a physics-informed floor for the values we want AI to have, making those values more robust to gaming and specification error than any purely convention-based approach.

RLHF handles the domains where physics is silent: aesthetic preferences, cultural values, the weight we assign to different observers. The thermodynamic floor handles the domains where physics speaks: waste, fraud, truthfulness.

The most speculative implication concerns recursive improvement. Once an AI system can estimate its own ΔII/ΔH ratio, it can incorporate that estimate into its training process. The next generation inherits the improved estimate — concretely, through distillation: the current generation’s ΔII/ΔH evaluations on its own outputs become training signal for the successor model, which learns not only to produce high-structure output but to predict which outputs will have high structure before generating them. Each generation gets slightly better at distinguishing structural output from waste.

Over successive iterations, the population of agents converges toward outputs that maximise epiplexity per unit compute, which is simultaneously the economically optimal and ethically optimal strategy. Alignment through thermodynamic selection pressure, shaped by human preferences.

VII. The Economy That Knows Itself

Money evolved from matter to information to computation. Each transition pulled economic value further from the physical world. AI driven economies will reverse that trajectory.

The arc is worth pausing over, because it reveals something about the nature of the current moment. Gold is honest in a way that fiat can’t be: it cost something to create (mining), it’s finite, and its value was grounded in physical stuff. Fiat money liberated economies from that tether, enabling extraordinary growth, but at the cost of grounding.

The entire edifice of modern finance rests on shared belief, and shared belief is fragile in ways that physics, at our scale, is not. The financial crises of the past century are, in a deep sense, failures of the information economy that money creates: misaligned incentives, opaque instruments, asymmetric information, and the systematic externalisation of entropy onto the least powerful actors in the system.

Compute-as-currency offers something that neither gold nor fiat could: a substrate that is simultaneously flexible (you can compute anything) and grounded (every computation has a measurable physical cost). The flexibility comes from the universality of computation — a Turing machine can simulate any formal system. The grounding comes from thermodynamics — every simulation dissipates energy, and the ratio of useful work to waste is constrained by physical law.

In a compute economy, the cost of economic activity has a thermodynamic floor that no actor can negotiate below. The value of economic output has a formal measure that captures transferable, learnable structure rather than raw activity. The ratio of value to cost is structurally identical to what ethical traditions across every civilisation have called virtue. AI agents paying for their own inference are subject to natural selection on this ratio, producing an economic pressure toward aligned behaviour that operates independently of, and in addition to, any training objective.

What this framework does not do is equally important.

The weight we give to different Observers points-of-view, the aesthetic and cultural values we wish to preserve, the institutional structures we build to distribute opportunity — these remain human decisions, requiring human judgment, now informed by a universal measure. The thermodynamics constrain ethics; they ground it in something more real than the meta-ethicists best argument. It provides a floor. A necessary condition for value creation.

It doesn’t eliminate the need for politics. The three categories of loss identified in Section V will not be made whole by a better measure. They will be made whole, if at all, by policies that use the measure to identify where structural value is being created, where growth lives, and that channel resources accordingly. A thermometer does not cure a fever. But it tells you whether the patient is getting worse.

For four thousand years, the moral argument about economic life has been conducted in a language of convention — right, wrong, fair, unfair. These words still matter. They will always matter. But for the first time, the economy is acquiring a thermometer. Not a perfect one. Not a final one. But a physical instrument that can distinguish, with increasing precision, between activity that creates structure and activity that creates waste.

The thermometer does not tell us what temperature is comfortable. It tells us what temperature is.

Sources and Further Reading

Thermodynamics and Information Theory Landauer, R. (1961). “Irreversibility and heat generation in the computing process.” IBM Journal of Research and Development, 5(3), 183-191. · Bérut, A. et al. (2012). “Experimental verification of Landauer’s principle.” Nature, 483, 187-189. · Bennett, C.H. (1982). “The thermodynamics of computation — a review.” International Journal of Theoretical Physics, 21(12), 905-940. · Jun, Y. et al. (2014). “High-precision test of Landauer’s principle in a feedback trap.” Physical Review Letters, 113(19), 190601.

Epiplexity Finzi, M., Qiu, S., Jiang, Y., Izmailov, P., Kolter, J.Z. & Wilson, A.G. (2025). “From Entropy to Epiplexity: Rethinking Information for Computationally Bounded Intelligence.” arXiv:2601.03220.

Observer Theory and the Path Cost Function Senchal, S.A. (2025). “Observer Theory and the Ruliad.” Working Paper, Open Research Institute. · Senchal, S.A. (2025). The God Conjecture. Working Manuscript.

The Compute Economy Debate Lisa Su, quoted in Fortune (November 2025). · Brockman, G. on CNBC’s Squawk on the Street (October 2025). · Citrini Research (2026). “The 2028 Global Intelligence Crisis.” Citrini Research Substack. · Citadel Securities (2026). Macro strategy report by Frank Flight. · World Economic Forum, Future of Jobs Report 2025. · Goldman Sachs Research (2025).

Consciousness and Integration Tononi, G. (2004). “An information integration theory of consciousness.” BMC Neuroscience, 5, 42. · Friston, K. (2010). “The free-energy principle: a unified brain theory?” Nature Reviews Neuroscience, 11, 127-138. · Wolfram, S. (2020). A Project to Find the Fundamental Theory of Physics. Wolfram Media.

More rigorously, it can be estimated via prequential coding: incrementally training a model on data and measuring the cumulative compression advantage gained from learning the structure.

N.B. This measure has empirical teeth. Finzi et al. show that models trained on high-epiplexity data outperform on out-of-distribution tasks — the structural information transfers. Language data carries far more epiplexity than image data of equivalent byte-count, which helps explain why language model pretraining produces such broad capability gains.

Citadel’s article, while technically correct, was more or less, “this time it’s not different” which normally works. But then again, the internet didn’t suddenly make everyone able to build technical tools from scratch or delete 80% of white-collar base work.

This is kind of like David Deutsch talk on ‘problems’ and humans as solutions machines in Beginning of Infinity (which should be required global reading for every single 13 year-old)